DOWNLOAD THE ENTIRE JULY 2017 NEWSLETTER including this month’s Freebie.

Laughter is universal.

It is a hardwired response that comes online early, in the first four months of life, regardless of culture or native language. Whether a child is raised in the Netherlands or Nigeria, Peru or Pakistan, that first laugh will delight the parents at about 14 to 18 weeks of age. A baby’s laugh is easily recognisable, partly because of its genuineness. Like crying, it is hard to fake and, like yawning, it is contagious. Its authentic quality makes it hard for parents to ignore. Scientists, on the other hand, have only recently caught on to its significance.

Of course, laughter is not exclusively an expression of amusement. In adults, it can occur in many emotional contexts, including when people are nervous, as a response to others’ laughter or more simply when in the company of other people. But why do infants laugh? It is not so much a question of what they find funny. There is no universal joke for infants. Instead we must consider how infants extract humour from their environment.

In contrast to crying, which clearly urges an infant’s caregiver into action, laughter seems like an emotional luxury. The fact that a three-month-old can have access to this ability— long before other major milestones such as talking and walking—suggests that her chortles, sniggers and guffaws have an ancient and important origin. Laughter can reveal a considerable amount about infants’ understanding of the physical and social world.

Baby Darwin

Laughter precedes language both in infancy and in the evolutionary chain, having been prioritised and preserved by nature. Indeed, several species, including chimpanzees, other apes and squirrel monkeys, engage in vocalisations during play that resemble laughter. These mammals— especially juveniles—display signature breathy and rhythmic sounds while frolicking together.

Evolutionary neuropsychologist Jaak Panksepp has shown that the brains of all animals contain the neural circuitry engaged in human laughter. These areas include emotional and memory centres, such as the amygdala and hippocampus. Laughter seems to bubble up from below the surface of the cortex as an involuntary response while activating the pleasure systems in the brain. Famously, Panksepp has even documented (by using technologies that allow humans to hear very high frequencies) that rats emit a rhythmic chirping sound when “tickled.”

In humans, infant laughter has gained the attention of a few prominent scholars. In the fourth century B.C., Aristotle posited that the first laugh marked an infant’s transition to humanness and served as primary evidence of that infant having acquired a soul. In 1872 Charles Darwin hypothesised that laughter, like other postural, facial and behavioural expressions of emotion, served as a social signal of “mere happiness or joy.” In his landmark volume, The Expression of the Emotions in Man and Animals, Darwin meticulously described the laughter of his own infant son, writing: “At the age of 113 days these little noises, which were always made during expiration, assumed a slightly different character, and were more broken or interrupted, as in sobbing; and this was certainly incipient laughter.”

Psychology, however, neglected the topic for decades. For most of its history, the discipline has primarily focused on negative emotions such as anger, depression, anxiety and major mental illness. This trend started to change about 40 years ago, when some psychologists began studying resilience to adversity, happiness and the psychology of well-being. A whole new subfield known as positive psychology was born.

Furthermore, it is only within the past 30 years that developmental psychologists have had methodologies for making inferences about infant cognition and emotion. One such method, the “gaze paradigm,” involves timing the duration of an infant’s stare. Several studies have demonstrated that babies will gaze longer at a novel object, which at its most basic level reveals that they can differentiate it from a familiar one.

In 1985 psychologists Elizabeth Spelke and Renée Baillargeon used the gaze paradigm to study infants’ conceptual knowledge. Spelke and Baillargeon began presenting infants with possible and impossible scenarios. For example, one object, in keeping with natural laws, would not penetrate a solid barrier, but a second, similar object would appear to do so. They found that babies gazed longer at unexpected events. These findings led researchers to deduce that infants come equipped with some simple expectations about how objects behave, which, when violated, result in their rapt attention. Such violations, it turns out, are powerful catalysts for humour.
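The logic of a looking-time comparison can be sketched in a few lines of Python. This is only an illustration of the idea, not the analysis Spelke and Baillargeon carried out: the function name `mean_looking_time` is hypothetical, and the looking times are invented values, not data from any study.

```python
from statistics import mean

# Hypothetical looking times in seconds, one value per infant.
# These numbers are invented for illustration only.
looking_times = {
    "possible": [4.1, 5.0, 3.8, 4.6],
    "impossible": [7.2, 6.5, 8.0, 6.9],
}


def mean_looking_time(times_by_event):
    """Return the average looking time for each type of event."""
    return {event: mean(times) for event, times in times_by_event.items()}


averages = mean_looking_time(looking_times)

# A longer average gaze at the impossible event is read as a sign
# that the infants' expectations were violated.
longer_at_impossible = averages["impossible"] > averages["possible"]
print(averages, longer_at_impossible)
```

A real study would also test whether the difference is statistically reliable; the sketch stops at the comparison of averages.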

Funny Business

Stand-up comedians often exploit expectations to make audiences laugh. They build suspense and push the boundaries of norms and acceptability to provoke our laughter, whether with puns, jokes or witty retorts. For something to be funny, the person telling a joke and the person hearing it need some common knowledge. Humour therefore requires at least some rudimentary understanding of the physical and social world. This understanding can be based on experience and observation, which provide the foundation for what is “ordinary.” With that baseline, we can differentiate the ordinary from the absurd.

Research from Gina Mireault’s lab shows that infants as young as five months, just a month after laughter comes online, can independently manage this basic perceptual difference. In 2014 she and her colleagues published findings from an experiment in which they presented 30 infants with ordinary and absurd events. For example, an experimenter might squish and roll a red foam ball as an ordinary scenario, then wear it as a nose in an absurd iteration of that event. Not only did infants distinguish between the two, they laughed at the latter. The key finding was that their laughter was not made in imitation; it occurred even when the experimenter and infants’ parents were instructed to remain emotionally neutral.

Just a few months later, at about eight months of age, infants can be effective comedians and understand how to make others laugh without using any words. Psychologist Vasudevi Reddy calls this nonverbal form of humour “clowning.” She has documented babies from eight to 12 months engaged in numerous forms of clowning, such as exposing their naked tummy while shaking back and forth, attempting to put their toes in a caregiver’s mouth while lying supine, or snatching a clean diaper and feigning disgust, followed by a smile.

Infants this age also engage in teasing, such as smiling coyly as they intentionally disobey a parent’s directive not to climb the stairs or offering the dog a biscuit, only to snatch it quickly back with a cheeky grin. Such “fake outs” have been reported even earlier by parents of six-month-olds, at which point infants can employ fake laughter (or tears) to draw attention to themselves or be included in an interaction that others are enjoying without them. Recall that laughter is difficult to fake, so these displays are easily detected.

Most important, infants create these novel interactions. They decide when and with whom to employ these techniques. As such, these types of playful, teasing exchanges can give us a window into infants’ awareness. Teasing in particular requires at least a rudimentary understanding of others’ minds, a desire to engage, and a guess or prediction as to how to provoke the mind of someone else. To trick someone else means to know that someone else can, in fact, be tricked. This knowledge, referred to as a theory of mind, is a mature insight that has traditionally been credited only to children who are at least four years old. Although infants do not have the theory-of-mind sophistication of older children, their ability to effectively tease and provoke others suggests they have at least some level of awareness.

Great Expectations

Clowning and teasing reflect the primarily social nature of humour, but for something to make us laugh aloud in amusement, we need more than just the presence of other people. After all, infants spend most of their time with others, though little of their time laughing. This is because humour—whether for adults or infants—also requires a cognitive component: incongruity.

Incongruity refers to a situation that psychologist Elena Hoicka describes as misexpected, meaning it creates a misalignment between what the infant expects and what she or he experiences. Misexpected events are slightly out of the ordinary. In contrast, truly unexpected happenings are completely shocking or surprising and, as such, can be perceived as more disturbing or amazing than humorous. For example, when a cup is worn as a hat, it does not match the infant’s prior experience with cups (or with hats). If the cup transformed into an antelope, the situation would be totally unexpected.

Adults, children and infants alike find unexpected events interesting but not necessarily funny. Multiple explanations arise from the research employing the violation of expectation paradigm. When infants are presented with violations of natural physical laws— such as gravity, solidity, inertia or quantity— they stare at these “magical” events, but they do not laugh. If we contextualise Hoicka’s ideas into the larger research on infant gaze and interest, we can speculate that perhaps humour relates to misexpectations of social behaviour. A toy flying through space and defying gravity is cause for wonderment. But Grandma wearing that toy on her head? Absolutely hilarious.

Humour theorists present one possible explanation through a phenomenon called incongruity resolution. To perceive an incongruity as humorous requires that the incongruity be resolved, which means understanding its cause or getting to the “punch line.” The aha moment at which a listener decodes the nuance or double entendre of a verbal joke, for example, is the moment of resolution. It is the point at which the incongruous nature of why “a guy walks into a bar” becomes humorous, whether or not it is accompanied by overt laughter.

Forty years ago many cognitive psychologists argued that infants were not sophisticated enough to resolve incongruity. Psychologists Diana Pien and Mary Rothbart, however, proposed that humour perception does not necessarily require advanced cognitive skills. In a study published in 2012 Gina Mireault put that idea to the test.

When Mireault’s group asked 30 parents to “do whatever you normally do to get your baby to laugh or smile,” they resorted to wildly exaggerated “clowning”: blowing raspberries, making odd faces and walking like a penguin. These are major permutations of ordinary daily interactions. At the very least, such behaviour gets a baby’s attention. Starting when the children were three and four months of age, the researchers tracked these families through their first year and found that 40 percent of the youngest children laughed in response to their parents’ antics; by five and six months, 60 percent of the infants laughed.

Infants need not do much to resolve these misexpectations to find them funny. In fact, there are at least three clues available to them. Social context is one example: these absurd acts are performed by a social partner, which may be enough to bias the infant toward interpreting the behaviour as positive. Parents typically pair clowning with their own smiling or laughing about 65 percent of the time. This combination signals that the antics are safe, satisfying and joyful.

A second factor is familiarity. Social partners often repeat silly actions over and over again until the infant laughs and then because she or he has laughed. It is possible that the caregiver’s repetition allows the infant to either predict the action and its outcome— a resolution in itself— or infer the intentionality of the act. That Dad is balancing a spoon on his nose is not an accident if he repeats the act several times. Psychologist Amanda Woodward has shown that, by their first birthdays, infants can infer intention from others’ actions and speech.

A third element that may help babies differentiate between magical and humorous incongruities is that the latter are possible. Ultimately there is nothing magical about Mum wearing a cup as a hat. The nonmagical nature of humorous events may move infants, as well as children and adults, beyond that initial state of wonder to a final state of humour.

Whatever their strategy, experimental evidence shows that although infants begin to laugh at humorous events at about five months of age, they can detect such activities even earlier. Four-month-olds in this study gazed at humorous events with intense interest, registering a significant heart rate deceleration. This physiological response is exhibited when they display the same interest in a stimulus, as well as when they smile.

Psychologist Stephen Porges proposes that heart rate deceleration does not necessarily reflect joy so much as prime the infant for it. When babies are confronted with something novel, they stare at it, a response that is accompanied by a heart rate deceleration. Porges suggests that this physiological calm acts as a kind of resource, allowing the infant to remain oriented toward a novel and nonthreatening stimulus. When this reaction is combined with their bias toward sociability, young infants may benefit from this calming response by finding pleasure in absurdity.

All Together Now

Gina Mireault’s work suggests that infants truly can perceive and create humour. But not all laughter relates to amusement. Although there is no evidence of infants laughing in discomfort, we know that adults can and do laugh without mirth. That observation may provide insight into its deeper purpose.

No matter how it is deployed, laughter is social. Robert Kraut and the late Robert Johnston ushered in the field of evolutionary psychology with a landmark 1979 study demonstrating that—among other things—bowlers were more likely to smile not after achieving a strike but after facing the audience following a strike. Psychologist Robert Provine found that laughter is 30 times more likely to occur in the company of other people, regardless of whether anything amusing is happening. Provine’s research shows that laughter usually follows banal comments such as, “I better be going!” or “Great to see you!” rather than comedic punch lines. In addition, people can be amused and not laugh at all.

For youngsters at play, laughter seems to signal both positive emotion and affiliation with one another. Evolutionary psychologists Robin Dunbar and Guillaume Dezecache have proposed that laughter keeps us connected and in harmony as adults when we have long given up rough-and-tumble romps. This idea is especially supported by the contagious quality of laughter in groups of people, including strangers.

Laughter, therefore, serves as a kind of social glue, with many possible meanings. Someone’s nervous giggle may prompt peers to provide comfort or assurance, and a mischievous chuckle can signal when roughhousing is meant purely in jest. Hoicka has described what she calls a “humorous frame,” in which social partners can interact in such a way that both actors interpret an interaction, such as teasing, as positive. Indeed, four- to six-month-old infants are poised for positive emotion. Not yet wary of strangers or of separation from primary caregivers, infants are ready for interaction with anyone, increasing their opportunities for play, smiling and laughter at just the moment when that new response is available to them. From an evolutionary perspective, this joint emergence of laughter and sociability is wise.

Laughter— it turns out— has a serious side. Its value as a social signal and mammalian superglue explains why it comes “factory-installed” as part of infants’ native hardware. At four months of age, infants’ laughter most likely is neurologically jump-started by their intense attention toward novelty and the salience of the broad social context. But within one month, babies have enough cognitive sophistication to detect and interpret new, nonthreatening social events as funny, all by themselves. A few months later they can produce such events, too, much to the joy of everyone.

REFERENCES

■ Infant Clowns: The Interpersonal Creation of Humour in Infancy. Vasudevi Reddy in Enfance, Vol. 53, No. 3, pages 247–256; 2001.

■ Laughing Matters. Gina C. Mireault in Scientific American Mind, Vol. 28, No. 3, May/June, pages 44–49; 2017.

■ How Infants Know Minds. Vasudevi Reddy. Harvard University Press, 2008.

■ Humor in Infants: Developmental and Psychological Perspectives. Gina C. Mireault and Vasudevi Reddy. Springer, 2016.

ogist Yannick Stephan of the University of Montpellier in France reached this conclusion after combining data from two large, survey-based studies. The Wisconsin Longitudinal Study (WLS) followed people who had graduated from that state’s high schools in 1957, as well as some of their brothers and sisters. The Midlife in the United States (MIDUS) study recruited people from across the country. Participants in both had completed personality questionnaires when first recruited in the 1990s and answered questions about their exercise habits and health.

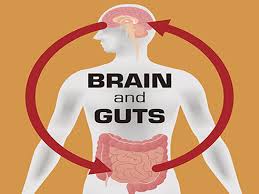

ctors have noted that constipation is one of the most common symptoms of Parkinson’s, appearing in approximately half the individuals diagnosed with the condition and often preceding the onset of movement-related impairments. Still, for many decades, the research into the disease has focused on the brain. Scientists initially concentrated on the loss of neurons producing dopamine, a molecule involved in many functions including movement. More recently, they have also focused on the aggregation of alpha-synuclein, a protein that twists into a weird shape in Parkinson’s patients. A shift came in 2003, when Heiko Braak, a neuroanatomist at the University of Ulm in Germany, and his colleagues proposed that Parkinson’s may actually originate in the gut rather than the brain.

the intestines drive neurodegeneration in the brain remains an active area of investigation. Some studies propose that aggregates of alpha-synuclein move from the intestines to the brain through the vagus nerve. Others suggest that molecules such as bacterial breakdown products stimulate activity along this channel, or that the gut influences the brain through other mechanisms, such as inflammation. Together, however, these findings add to the growing consensus that even if Parkinson’s is very much driven by brain abnormalities, it doesn’t mean that the process starts in the brain.

If alpha-synuclein does travel from the intestines to the brain, the question still arises: Why does the protein accumulate in the gut in the first place? One possibility is that alpha-synuclein produced in the gastrointestinal nervous system helps fight off pathogens. Last year, Michael Zasloff, a professor at Georgetown University, and his colleagues reported that the protein appeared in the guts of otherwise healthy children after norovirus infections, and that, at least in a lab dish, alpha-synuclein could attract and activate immune cells.

Their analysis compared 144,018 individuals with Crohn’s or ulcerative colitis and 720,090 healthy controls. It revealed that the prevalence of Parkinson’s was 28 percent higher in individuals with the inflammatory bowel diseases than in those in the control group, supporting earlier findings from the same researchers that the two disorders share genetic links. In addition, the research team discovered that in people who received drugs used to reduce inflammation, namely tumour necrosis factor (TNF) inhibitors, the incidence of the neurodegenerative disease dropped 78 percent.
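The figures quoted for this analysis can be unpacked with a little arithmetic. The short Python sketch below uses only the numbers given in the text; the variable names are illustrative, and it assumes the 78 percent drop is measured against comparable people who did not receive the drugs, which the text does not spell out.

```python
# Group sizes reported in the text.
ibd_patients = 144_018
controls = 720_090

# Number of healthy controls for each person with inflammatory bowel disease.
controls_per_patient = controls / ibd_patients

# "28 percent higher" prevalence corresponds to a prevalence ratio of 1.28.
prevalence_ratio = 1 + 28 / 100

# A "78 percent drop" in incidence leaves 22 percent of the comparison rate
# (assumption: the comparison group is people who did not receive the drugs).
remaining_fraction = 1 - 78 / 100

print(controls_per_patient, prevalence_ratio, round(remaining_fraction, 2))
```

In other words, the study matched five controls to each patient, and both percentages describe relative rather than absolute changes in risk.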

Because not all Parkinson’s patients will have inflammatory bowel disorders, findings from the investigations into the co-occurrence of the two conditions might not generalise to everyone with the neurodegenerative disease. Still, these studies and many others that have emerged in recent years support the idea that the gut is involved in Parkinson’s. If this turns out to be true in the long run, it may allow researchers to devise treatments that target the gut instead of the brain.

early the gastrointestinal changes occur remains. In addition, other scientists have suggested that it is still possible that the disease begins elsewhere in the body. In fact, Braak and his colleagues also found Lewy bodies in the olfactory bulb, which led them to propose the nose as another potential place of initiation. (Interestingly, from a natural medicine perspective, we’re taught that loss of the sense of smell is a very early warning sign for Parkinson’s.) It could turn out that there are multiple sites of origin for Parkinson’s disease. For some people, it might be the gut; for others it might be the olfactory system, or it might just be something that occurs in the brain.

Ketamine has been called the biggest thing to happen to psychiatry in 50 years. The notorious party drug may act as an antidepressant by blocking neural bursts in a little-understood brain region that may drive depression. It improves symptoms in as little as 30 minutes, compared with weeks or even months for existing antidepressants, and is effective even for the roughly one third of patients with so-called treatment-resistant depression.

Both new studies probe the workings of the lateral habenula (LHb), a small, central brain region wedged between the stalk of the pineal gland and the thalamus that acts like the dark twin of the brain’s reward centres by processing unexpectedly unpleasant events. For example, if an animal has been trained to expect food when reaching the end of a maze and the reward is not there, the LHb activates, signalling a discrepancy between expectation and outcome. This has led to the LHb being dubbed the key part of a “disappointment circuit.” If the LHb is overactive, it could suppress rewards from normally pleasurable activities (a symptom known as anhedonia), leading to long-term apathy and hopelessness. Studies in animals suggest hyperactivity in the LHb contributes to depression, but the details have been murky.

A study by Niels Birbaumer and his team at the University of Tübingen put individuals with social anxiety disorder and criminal psychopaths through an MRI scanner. In those with social anxiety, they found the neural signature of a hair-trigger social smoke alarm: an overactive frontolimbic circuit. In psychopaths, they found the exact opposite: an underactive frontolimbic circuit. Additional studies have strengthened the idea that psychopathy and social anxiety lie at opposite ends of the spectrum.

The survey covered mood disorders (depression, dysthymia and bipolar), anxiety disorders (generalised, social and obsessive-compulsive), attention-deficit hyperactivity disorder and autism. It also covered environmental allergies, asthma and autoimmune disorders. Respondents were asked to report whether they had ever been formally diagnosed with each disorder or suspected they suffered from it. With a return rate of nearly 75 percent, Karpinski and her colleagues compared the percentage of the 3,715 respondents who reported each disorder to the national average.

All the same, Karpinski and her colleagues’ findings set the stage for research that promises to shed new light on the link between intelligence and health. One possibility is that associations between intelligence and health outcomes reflect pleiotropy, which occurs when a gene influences seemingly unrelated traits. There is already some evidence to suggest that this is the case. In a 2015 study, Rosalind Arden and her colleagues concluded that the association between IQ and longevity is mostly explained by genetic factors.

Not getting enough sleep can lead to car accidents, medical errors or other mistakes on the job. To promote better sleep, the medical community encourages adults to engage in good “sleep hygiene” such as limiting or avoiding caffeine and nicotine, avoiding naps during the day, turning off electronics an hour before bed, exercising and practicing relaxation before bedtime. It is also well known that mental health is closely linked to sleep; insomnia is more common in people suffering from depression or anxiety.

It is important to emphasise that this study only looked at the association between a sense of purpose and better sleep—the findings cannot say for sure that having a greater sense of purpose causes one to sleep better. An alternative interpretation for the findings is that people who have a greater sense of purpose also tend to have better physical and mental health, which in turn explains their higher quality sleep. Another important limitation of the study is that the findings rely entirely on people’s self-reported sleep symptoms. The researchers did not bring participants into a lab and actually monitor the quality of their sleep. Therefore, it is possible that people with a higher sense of purpose simply remember getting better sleep compared to people who do not report experiencing a sense of purpose in life.

Developing a sense of purpose in life may simultaneously convey other benefits in addition to better sleep. Research has linked experiencing purpose in life to a variety of other positive outcomes including better brain functioning, reduced risk of heart attack and even a higher income. People with a greater sense of purpose in their life would surely be better off while also serving as a positive example in the lives of those they know.

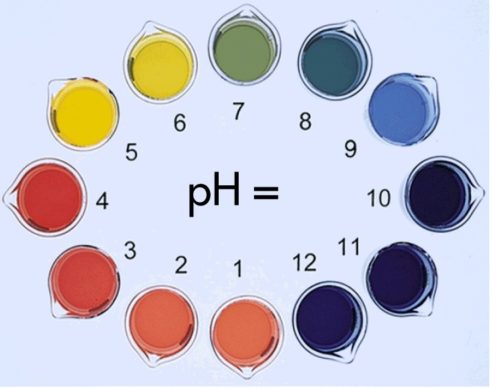

The human brain frequently undergoes changes in acidity, with spikes from time to time. One main cause of these temporary surges is carbon dioxide gas, which is constantly released as the brain breaks down sugar to generate energy. Yet the overall chemistry in a healthy brain remains relatively neutral because processes such as respiration—which expels carbon dioxide—help to maintain the status quo. As a result, fleeting acid-base fluctuations usually go unnoticed.

In the 1960s, a surgical technique to reduce stomach size (called bariatric surgery) was introduced to help obese patients lose weight. Doctors considered this primarily a mechanical fix. A smaller stomach, the reasoning went, simply cannot hold and process as much food. Patients get full faster, eat less and therefore lose weight.

Then there is the hedonistic thrill of sitting down to a meal. Eating also lights up our reward circuitry, pushing us to eat for pleasure independent of energy needs. It is this arm of the gut-brain axis that many scientists feel contributes to obesity.

of controlling living tissue using light—to activate or silence specific neurons in the brain stem parabrachial nucleus pathway in mice. He found that engaging this circuit strongly reduced food intake. But deactivating it left the brain insensitive to the cocktail of hormones that typically signaled satiety—such that mice would keep eating.

A similar study, published in 2015 by biologist Fredrik Bäckhed of the University of Gothenburg in Sweden, found that two types of bariatric surgery—the Roux-en-Y gastric bypass and vertical banded gastroplasty—resulted in enduring changes in the human gut microbiota. These changes could be explained by multiple factors, including altered dietary patterns after surgery; acidity levels in the gastrointestinal tract; and the fact that the bypass procedure causes undigested food and bile (the swamp-green digestive fluid secreted by the liver) to enter the gut farther down the intestines.

The Mayo Clinic believes that in the future the best approach to treating obesity will be highly personalised. It considers obesity a disease of the gut-brain axis, in which the abnormal part of the axis must be identified in each patient so that treatment can be personalised.

in which well-meaning doctors have played a part. Driven by chronic pain, sales of prescription opioid painkillers quadrupled between 1999 and 2014. In 2012 alone, doctors issued 259 million opioid prescriptions, enough to give a bottle of pills to every adult in the United States. And in 2015 more than half of all overdose deaths in the USA involved opioids, either pain medications (such as OxyContin and Vicodin) or illicit substances (such as opium and heroin). To put that statistic in perspective, opioids claimed roughly as many lives that year as car crashes.

No matter how chronic pain starts, it often increases and spreads, leaving many people reaching for more pills. Unfortunately, higher doses of opioid drugs do not guarantee relief—and can actually make matters worse. For starters, patients build tolerance to these medications, so that over time, it takes more opioids to blunt the same levels of pain. Higher doses increase the risk of dangerous side effects, including addiction, coma and death. And recent research shows that even relatively low doses of opioids can also cause hyperalgesia, or an increased sensitivity to pain: sometimes these drugs intensify the very pain they are meant to suppress.

Many experts now view chronic pain as a disease in its own right. Over time it engages and changes patterns of activity in brain areas associated not only with physical sensations but with sleep, thought and emotion. No wonder that studies show that chronic pain is associated with higher rates of mortality, sleep disorders, depression and anxiety.

Buddhist meditation practices. Jon Kabat-Zinn, now a professor of medicine emeritus at the University of Massachusetts Medical School, developed MBSR in the 1970s. Since then, MBSR classes have become available in more than 30 countries. A growing body of evidence suggests that MBSR—which encourages nonjudgmental awareness of the present moment and fosters greater mind-body awareness—can mitigate a variety of ailments, from cancer and depression to drug addiction and chronic pain.

That finding reinforces the idea that when it comes to pain, simply being under the care of a receptive health care professional can be palliative. Researchers are investigating how all these complementary treatments work. Thankfully they don’t seem to be waiting for basic science to tell them the optimal way to treat pain. There is broad agreement that mindfulness, yoga, biofeedback and acupuncture may succeed by changing patients’ relationship to their pain rather than actually lowering the intensity of the physical sensation. If patients are suffering then it would seem logical (and humane) to find what really works among the various modalities available.

Stand-up comedians often exploit expectations to make audiences laugh. They build suspense and push the boundaries of norms and acceptability to provoke our laughter, whether with puns, jokes or witty retorts. For something to be funny, the person telling a joke and the person hearing it need some common knowledge. Humour therefore requires at least some rudimentary understanding of the physical and social world. This understanding can be based on experience and observation, which provide the foundation for what is “ordinary.” With that baseline, we can differentiate the ordinary from the absurd.

Most important, infants create these novel interactions. They decide when and with whom to employ these techniques. As such, these types of playful, teasing exchanges can give us a window into infants’ awareness. Teasing in particular requires at least a rudimentary understanding of others’ minds, a desire to engage, and a guess or prediction as to how to provoke the mind of someone else. To trick someone else means to know that someone else can, in fact, be tricked. This knowledge, referred to as a theory of mind, is a mature insight that has traditionally been credited only to children who are at least four years old. Although infants do not have the theory-of-mind sophistication of older children, their ability to effectively tease and provoke others suggests they have at least some awareness of other minds.