The Self-Compassion Solution – Love Yourself, Too

DOWNLOAD THE ENTIRE MAY 2017 NEWSLETTER including this month’s Freebie.

Self-compassion, at its most basic level, means treating yourself with the same kindness and understanding that you would a friend. People who struggle with this concept, research shows, do not necessarily lack compassion toward others. Rather they hold themselves to higher standards than they would expect of anyone else. Developing self-compassion allows them to recognise and accept their own feelings rather than constantly challenging themselves to “do better.”

A growing number of people are discovering that practicing self-compassion can be a surprisingly effective alternative to the crippling yet common habit of shame-laden self-criticism. Since the birth of self-compassion as a scientific construct—with the publication of a seminal paper by psychologist Kristin Neff of the University of Texas at Austin in 2003—the volume of academic publications investigating self-compassion has snowballed.

In the past few years self-compassion has gone mainstream, as some of its researchers and practitioners—including Neff—have written books and created workshops to popularise the concept. Untold numbers of life coaches, mindfulness teachers and psychotherapists now tout the benefits of self-compassion. Psychotherapists see it as a natural component of well-studied therapies that focus on accepting and gradually changing unhelpful thoughts or behaviour patterns, such as cognitive-behavioural therapy and acceptance and commitment therapy.

Yet many people resist self-compassion, fretting that being compassionate toward ourselves will make us egocentric, self-indulgent or weak. If we are easy on ourselves after a setback, we wonder, will we turn soft and complacent? This question is one of many self-compassion research has tried to answer. The conclusion: a resounding “no.” As mounting evidence shows, self-compassion is typically a source of both personal and interpersonal strength, making self-compassionate individuals more emotionally stable, more motivated to improve themselves and generally better to be with.

Buddhist Roots

Neff, the pioneer in the scientific study of self-compassion, became interested in the topic in the 1990s. As a Ph.D. candidate struggling with the breakup of her first marriage, she was full of shame and self-loathing. She began attending meditation classes and exploring Buddhist thought.

Neff knew that compassion entails concern with another’s pain and a desire to alleviate that person’s suffering, but she had never thought about directing that energy toward herself until she read Buddhist teacher Sharon Salzberg’s book Lovingkindness. She felt transformed by its message that showing kindness to oneself is essential for showing genuine love toward others. She soon began to lay the groundwork to study self-compassion scientifically.

Through her reading, Neff discerned three indispensable elements of self-compassion: kindness toward yourself in difficult times; mindfulness, or paying attention to your suffering in a non-obsessive way; and common humanity, or the recognition that your suffering is part of the human experience rather than unique to you. These three components (along with their opposites) became the basis of the questions Neff used to develop a self-compassion scale, an instrument she published in 2003 in the journal Self and Identity that is now widely used by other researchers in assessing a person’s level of this trait.

Using this scale, Neff has shown that self-compassion correlates with important real-world outcomes. In particular, she found that people who score high in self-compassion are less prone to anxiety and depression.

Psychologist Juliana Breines first encountered Neff’s work while she was an undergraduate at the University of Michigan. Breines suspected self-compassion could help people get off the roller coaster of “contingent self-esteem”—that is, the problem of tying your evaluation of yourself to fluctuating factors such as academic achievement and others’ approval. Many studies have demonstrated that this kind of thinking is not conducive to mental health or learning. But Breines worried self-compassion might also undermine motivation. As she puts it, “Self-compassion might be comforting, but does it let you off the hook too easily?”

Breines tested this question a few years later, as a Ph.D. student at the University of California, Berkeley. In one of a series of experiments, she and her colleagues had 86 undergraduates take a tough vocabulary quiz. To see the effect of self-compassion on study behaviour, they told one group that it was common to find the test difficult and urged subjects not to be too hard on themselves. A second group got a self-esteem message instead: “Try not to feel bad about yourself—you must be intelligent if you got into Berkeley.” A third group received no additional statements.

Then the researchers measured how long the undergrads would study for a second, similar test. As they reported in 2012 in Personality and Social Psychology Bulletin, the self-compassion group went on to spend 33 percent more time studying for the subsequent quiz than the self-esteem group and 51 percent longer than the neutral control group—a sign that self-compassion bolsters motivation. Being kind to yourself can make it safe to fail, which encourages you to try again.

In a pair of 2012 studies led by social psychologist Ashley Batts Allen, researchers investigating self-compassion in older adults found both psychological and practical benefits. In the first study, with 132 participants ranging from 67 to 90 years old, they found that people who were strongly self-compassionate reported a greater sense of well-being even when they were in poor health. In the second study, involving 71 seniors, self-compassion predicted how likely they were to be willing to use a walker if necessary. The self-compassionate people were just less bothered by the fact that they needed help. If you are low in self-compassion, you’re using too much emotional energy thinking about the bad feelings and not enough addressing the real issues. For example, denying one problem—insisting on not using a walker—can create further difficulties, such as a hip fracture. The mindfulness component of high self-compassion, in contrast, leads people to acknowledge and accept reality, without an emotional judgment. The common-humanity component helps, too, by, for example, allowing one to recognise that everyone has physical limitations with age.

In 2014 Leary and his colleagues studied 187 mainly African-American people living with HIV. Patients who were higher in self-compassion showed healthier reactions to life with the potentially deadly virus: they experienced less stress, felt less shame about their condition, and were more likely to express a willingness to disclose their HIV status and to adhere to medical treatment. And a 2015 meta-analysis of 15 studies with a total of 3,252 participants, published in Health Psychology, found links between self-compassion and health-promoting behaviours related to eating, exercise, sleep and stress management.

Bouncing Back to Normal

Research indicates that the self-compassionate are more psychologically resilient and better able to regain emotional well-being after adversity. People who used self-compassionate language after their divorce, for example, recovered more quickly than those who had a more self-critical or self-pitying (“Why me?”) outlook on the relationship’s failure, according to a 2012 study of 109 adults.

Caregivers, too, can benefit. Raising an autistic child, for instance, is more emotionally difficult than other forms of parenting, with levels of stress and hopelessness that tend to correspond to the severity of the child’s symptoms. Yet a 2015 study of 51 parents of autistic children found that those mothers’ and fathers’ self-compassion was more important than the severity of the child’s symptoms in predicting a caregiver’s well-being.

Yet another example comes from 115 combat veterans of the wars in Iraq and Afghanistan. In a 2015 study in the Journal of Traumatic Stress, self-compassionate war veterans experienced much less severe post-traumatic stress disorder (PTSD) symptoms than those lower in self-compassion, even after accounting for the level of combat exposure. It is a powerful testament to the idea that it’s not what you face in life, it is how you relate to yourself when you face very hard times.

Recent studies of people with other psychiatric disorders, including binge eating and borderline personality disorder, suggest that self-compassion helps recovery. Allison Kelly, a psychologist at the University of Waterloo in Ontario who has studied the effect of a self-compassion intervention on binge-eating disorder, points out that recovery requires not only learning to tolerate urges to binge but also figuring out how to bounce back after giving in to those urges. If, like a drill-sergeant coach or critical teacher, you’re threatening yourself into change and beating yourself up whenever you slip up, it makes it hard to feel calm and confident. It often takes away the ability to reflect and learn from what you’re going through.

Self-compassion might seem to go hand in hand with self-esteem. In fact, self-compassion can coexist with low self-esteem and can buffer against it. In a 2015 longitudinal study led by Sarah Marshall, a psychologist at Australian Catholic University, researchers tracked a group of 2,448 students as they moved from ninth to 10th grade. Marshall found that high self-esteem was a precursor to good mental health, regardless of the students’ level of self-compassion. But self-compassionate kids who had low self-esteem also showed good mental health.

That news is good because it is usually easier to raise someone’s self-compassion than their self-esteem. It is hard to get people with low self-esteem to like themselves until they develop more social skills, get a better job or otherwise change their circumstances. By comparison, the bad habits of low self-compassion, such as denying a problem exists or beating yourself up, are easier to break.

Stronger Relationships

Recent research suggests that self-compassion is also good for relationships. Neff led a 2013 study of 104 couples that looked at how self-compassionate people treat their romantic partner—as rated by that partner. In general, men and women who scored high in self-compassion were seen as more caring and supportive (and less controlling and verbally aggressive) than individuals low in self-compassion.

Yet Neff has also found that most people have an easier time being compassionate to others than to themselves. A striking illustration is another 2013 study in which she measured both self-compassion and self-reported compassion for others among 384 college students. Neff found absolutely no correlation between the two forms of compassion; similar studies of practicing meditators and of ordinary adults showed only weak correlations. She has also noticed that practitioners of Buddhist metta, or loving-kindness, meditation—in which you start by wishing yourself well and go on to extend your goodwill toward an increasingly widening circle of empathy—give short shrift to the beginning section. Instead they focus on kindness to others.

But if people find it easier to show compassion to others than to themselves, how can we understand the results from the couples study? Neff believes that being kinder to others than to yourself, though possible, will not carry people through long-term relationships.

This interpretation dovetails with findings, published in 2013 in Self and Identity, that revealed how self-compassionate people handle interpersonal conflicts. The study, led by applied statistician Lisa Yarnell, involved 506 undergraduates. Yarnell, now at the American Institutes for Research, found that students high in self-compassion were better at balancing the needs of themselves and of others and felt better about a conflict’s resolution than those low in self-compassion. The self-compassionate individuals reported lower levels of emotional turmoil and greater relational well-being.

These findings have implications for full-time caregivers, who have long been known to be at risk for burnout and “compassion fatigue,” a deadening of compassion through overuse. In fact, a 2016 cross-sectional survey study of 280 registered nurses in Portugal suggested that although nurses with higher levels of empathy were at greater risk of compassion fatigue, empathy was not a risk factor if it was accompanied by self-compassion.

Teaching Self-Compassion

If being self-compassionate has so many positive outcomes, can people learn to treat themselves more kindly?

One promising intervention is mindful self-compassion, or MSC, an eight-week workshop that Neff developed with Christopher Germer, a clinical psychologist who teaches part-time at Harvard Medical School. The MSC program, designed for the general public, explains the research on self-compassion and introduces a variety of exercises, such as savouring pleasant experiences, touching yourself soothingly, using a warm and gentle voice, and writing a letter to yourself from a loving imaginary friend.

In a small study published in 2013, Neff and Germer found that 25 people (mainly middle-aged women) who completed an MSC workshop reported greater gains in self-compassion and well-being than a similar group randomly assigned to the wait list for the workshop. Furthermore, the workshop participants maintained their gains a year later. Interestingly, people in the control group also showed some gains in self-compassion: between the pre-test and post-test phases, the control group’s self-compassion scores rose 6.5 percent, whereas the experimental group’s scores rose 42.6 percent. The control group’s gain initially puzzled the researchers—until they discovered that the wait-listed group used the time to learn about self-compassion independently through books and websites.

It remains unclear how much the MSC participants’ success is related to the training itself as opposed to, say, being in a group or having caring teachers, notes Julieta Galante, a research associate in psychiatry at the University of Cambridge. Last year Galante and her colleagues published the results of an online, four-week randomised controlled study of only the loving-kindness meditation—an exercise often used to cultivate compassion for yourself and others but not targeted specifically to relieve suffering. The team found no difference between the meditation group and a control group doing light physical exercise.

Furthermore, many people dropped out of the intervention, some actually describing intense, troubling emotions—crying uncontrollably or realising they had no uncomplicated relationships in their lives. Germer and Neff brace their workshop participants for this possibility, using the firefighting metaphor of “back draft” to explain the phenomenon: just as flames rush out of a room as oxygen returns, old pain can surface amid an influx of compassion in people starved of love. It is possible that before taking a course, some individuals may need to ease into self-compassion practice slowly, perhaps with the aid of a therapist.

Paul Gilbert, a professor of clinical psychology at the University of Derby in England, agrees. In his years of treating victims of childhood abuse or neglect, he has observed that kindness can backfire. Anything that stimulates fragile attachment systems can trigger memories of past trauma, particularly in cases of childhood abuse. There are so many fears and resistances to compassion that it “would just blow fuses to start with exercises for the general public,” Gilbert says.

The compassion-focused therapy (CFT) that he developed for such patients and tested through small-scale studies starts with psycho-education and proceeds gradually. Gilbert explains to patients, for example, that self-criticism is not their fault and shows how it may have developed as a way to protect themselves from threatening parents. Once patients understand that neither their genes nor their early environment is their fault, they can begin to let go of shame—and start taking responsibility for their future.

REFERENCES

The Compassionate Mind: A New Approach to Life’s Challenges. Paul Gilbert. New Harbinger Publications, 2009.

Self-Compassion: The Proven Power of Being Kind to Yourself. Kristin Neff. William Morrow, 2011.

Self-Criticism and Self-Compassion: Risk and Resilience. Ricks Warren, Elke Smeets and Kristin Neff in Current Psychiatry, Vol. 15, No. 12, pages 18–21, 24–28 and 32; December 2016.

Metta Institute describes “Metta Meditation”:

A few years later a friend told Carolyn about a depression-prevention study at the University of Pittsburgh. She signed up immediately. All 247 participants were, like her, older adults with mild depressive symptoms—people who without treatment face a 20 to 25 percent chance of succumbing to major depression. Half received about five hours of problem-solving therapy, a cognitive-behavioral approach designed to help patients cope with stressful life experiences. The rest, including Carolyn, received dietary counseling. Guided by a social worker, she discovered that she liked salmon, tuna and a number of other “brain-healthy” foods—which quickly replaced all the crisps, cake and sweets she was eating.

Sánchez-Villegas later confirmed the association in another large trial. The PREDIMED (Prevention with Mediterranean Diet) study—a multicentre research project evaluating nearly 7,500 men and women across Spain—initially looked at whether a Mediterranean diet, supplemented with extra nuts, protects against cardiovascular disease. It does. But in 2013 Sánchez-Villegas and other investigators also analysed depression data among PREDIMED’s participants. Again, compared with subjects who ate a generic low-fat diet, those who adhered to the nut-enriched Mediterranean diet had a lower risk for depression. This was especially true among people with diabetes, who saw a 40 percent drop in risk. Perhaps these patients, who cannot adequately process glucose, benefited the most because the Mediterranean diet minimised their sugar intake.

Two meta-analyses from 2010 and 2012 collectively reviewed data from 53 studies and reported significantly elevated levels of several blood markers of inflammation in depressed patients. And numerous studies have reported increased or altered activity of immune cells called microglia—which play a key role in the brain’s inflammatory response—in patients with psychiatric disorders, including depression and schizophrenia. It is not clear whether inflammation causes mental illness in some cases, or vice versa. But the evidence suggests that many if not most known risk factors for psychiatric disorders, especially depression, promote inflammation; these include abuse, stress, grief and certain genetic predilections.

Our evolutionary backstory could explain these neuro-protective effects. Sometime between 195,000 and 125,000 years ago, humans may have nearly gone extinct. A glacial period had set in that probably left much of the earth icy and barren for 70,000 years. The population of our hominin ancestors plummeted to possibly only a few hundred in number, and most experts agree that everyone alive today is descended from this group. Exactly how they—or early modern humans, for that matter—managed to stay alive during recurring glacial periods is less clear. But as terrestrial resources dried up, foraging for marine life in reliable shellfish beds surrounding Africa most likely became essential for survival. Graduate student Jan De Vynck of Nelson Mandela Metropolitan University in South Africa has shown that one person working those shellfish beds can harvest a staggering 4,500 calories an hour.

In 1972 psychiatrist Michael Crawford, now at Imperial College London, co-published a paper concluding that the brain is dependent on DHA and that DHA sourced from the sea was critical to mammalian brain evolution, especially human brain evolution. For more than 40 years he has argued that the rising rates of brain disorders are a result of post–World War II dietary changes—especially a move toward land-sourced food and, subsequently, the embrace of low-fat diets. He feels that omega-3s from seafood were critical to the human species’ rapid neural march toward higher cognition.

In one striking (if slightly nauseating) experiment in 2014, then 23-year-old student Tom Spector wiped out about a third of the bacterial species in his gut by limiting his diet to McDonald’s fast food. It took only 10 days. Spector played the guinea pig for two reasons: as a project to complete his genetics degree and to provide data for his father, Tim, a genetic epidemiology professor at King’s College London, who studies how processed diets affect gastrointestinal bacteria. The Spector family’s research did not assess specific health consequences—they were measuring only the drop in floral diversity in Tom’s gut—but Tom did report feeling lethargic and down after days of burgers, fries and sugary soda. The decline in species was so drastic that Tim sent the results to three laboratories for confirmation.

The good news is that dietary changes can not only wreck our microbial diversity, they can boost it, reducing gastrointestinal inflammation in the process. In 2015 a group at the University of Pittsburgh conducted a study in which 20 African-Americans from Pennsylvania swapped diets with 20 rural black South Africans. In place of their usual low-animal-fat, high-fibre diet, the Africans consumed burgers, fries, hash browns, and the like. The Americans eschewed their normal fatty foods and refined carbohydrates for beans, vegetables and fish. After just two weeks the Americans’ colons were less inflamed, and fecal samples showed a 250 percent spike in butyrate-producing bacterial species. Butyrate is thought to reduce the risk of cancer. The South Africans, on the other hand, underwent microbial changes associated with increased cancer risk.

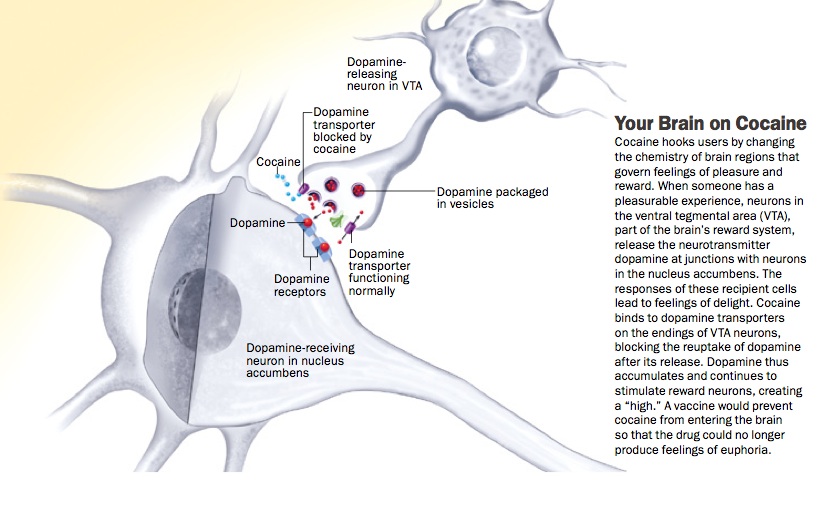

His colleague at the University of Michigan, Terry Robinson, had been using a neurotoxin to destroy dopamine neurons and create rats that modelled severe symptoms of Parkinson’s. Berridge decided to give sweet foods to these rodents and see if they appeared pleased. He expected that their lack of dopamine would deny them this response. Because they were so dopamine-depleted, Robinson’s rats rarely moved if left alone. They did not seek food and had to be fed artificially. Unexpectedly, however, their facial reactions were completely normal—they continued to lick their lips in response to something sweet and grimace at a bitter meal.

This dissociation fit with studies of Parkinson’s patients. They are still, after all, able to enjoy life’s ups but often have problems with motivation. Perhaps the most vivid example of this occurred in the early 20th century, when an epidemic of encephalitis lethargica left thousands of people with an especially severe parkinsonian condition. Their brains were so depleted of dopamine that they were unable to initiate movement and were essentially “frozen in place” like living statues. (The film Awakenings, which starred Robin Williams as neurologist Oliver Sacks, was based on the doctor’s 1973 memoir of treating such patients.) But a sufficiently strong external stimulus could spark action for people with this condition. In one case cited by Sacks, a man who typically sat motionless in his wheelchair on the beach saw someone drowning. He jumped up, rescued the swimmer and then returned to his prior rigidly fixed position. One of Sacks’s own patients would sit silent and still unless thrown several oranges, which she would then catch and juggle.

This dissociation fit with studies of Parkinson’s patients. They are still, after all, able to enjoy life’s ups but often have problems with motivation. Perhaps the most vivid example of this occurred in the early 20th century, when an epidemic of encephalitis lethargica left thousands of people with an especially severe parkinsonian condition. Their brains were so depleted of dopamine that they were unable to initiate movement and were essentially “frozen in place” like living statues. (The film Awakenings, which starred Robin Williams as neurologist Oliver Sacks, was based on the doctor’s 1973 memoir of treating such patients.) But a sufficiently strong external stimulus could spark action for people with this condition. In one case cited by Sacks, a man who typically sat motionless in his wheelchair on the beach saw someone drowning. He jumped up, rescued the swimmer and then returned to his prior rigidly fixed position. One of Sacks’s own patients would sit silent and still unless thrown several oranges, which she would then catch and juggle.

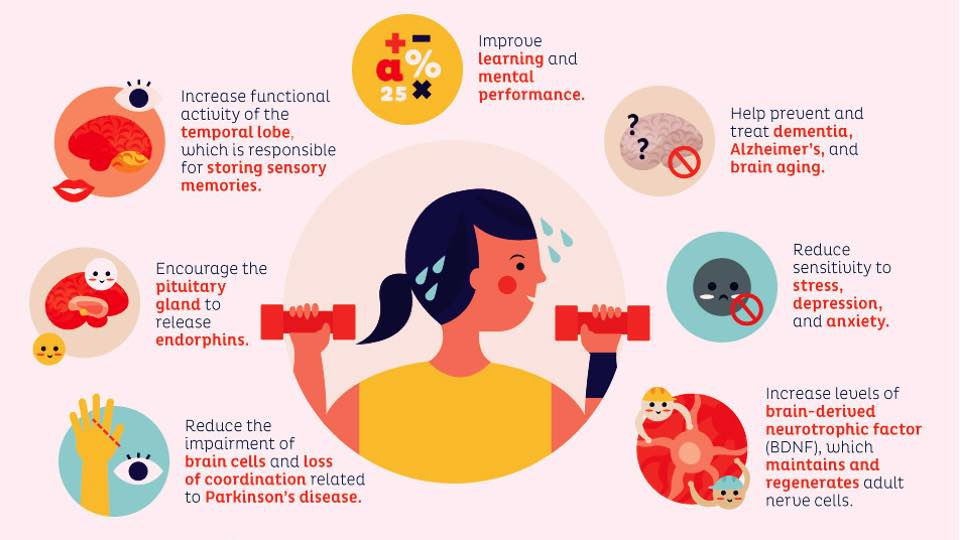

Depression is one of the leading causes of disability and death around the globe, according to the World Health Organisation. At any given time, it afflicts around 350 million people worldwide. Only a fraction of sufferers seek help, and of those, only a third respond to standard treatment, which is usually counselling and medication. Antidepressant drugs are often costly and can have serious side effects, driving many patients to search for less expensive, safer, more natural solutions. In a survey of more than 2,000 U.S. adults published in 2001, more than half of the respondents with depression said that they had turned to some kind of alternative treatment, such as yoga, herbal medicines or acupuncture.

Some researchers have attempted to figure out what types of exercise and intensity levels are most effective as an antidepressant. In a frequently cited study from 2005, for example, psychiatrist Madhukar Trivedi (University of Texas Southwestern Medical Center) and his colleagues tracked the health of 80 adults with mild to moderate depression for three months as they exercised three to five times a week on a treadmill or stationary bicycle at low intensity (seven kilocalories per kilogram per week) or at a higher intensity, as recommended by public health authorities (17.5 kilocalories per kilogram per week). At the end of the three months, the adults who exercised at the higher intensity had lessened the severity of their depression by 47 percent, compared with only 30 percent for the low-intensity group and 29 percent for a group who engaged in stretching rather than aerobic exercise.

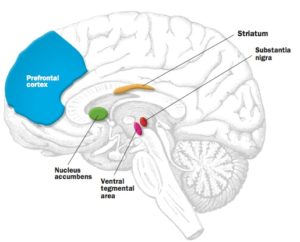

Exercise also seems to mimic some of the chemical effects of antidepressant medication. Based on increasing evidence, some scientists argue that certain cases of depression result from the impaired growth of both brain cells and the connections between them. Studies have documented the atrophy and loss of neurons in brain regions such as the amygdala, hippocampus and prefrontal cortex in patients with major depression. Antidepressants that increase levels of serotonin and other neurotransmitters might work by reinvigorating neural proliferation, a process that depends in part on a molecule called brain-derived neurotrophic factor (BDNF). In studies with both animals and people, exercise enhances the production of BDNF.

Despite the mounting evidence that exercise can remedy some forms of depression, skepticism persists in academia and health care. Trivedi has found that there is a general bias that exercise is not a bona fide treatment—it’s just something you should do in addition to treatment, like trying to sleep and eat well. Even though recognition of exercise as a treatment is increasing, only some health insurance companies pay for gym time, and when they do, they often offer small temporary discounts.

Studies confirm that many modern employees are perpetually preoccupied with work: even when they get a break, they feel obligated to keep working. The European Union mandates 20 days of paid holiday, but the U.S. has no federal laws guaranteeing paid time off, sick leave or breaks for national holidays. Canada, Japan and Hong Kong mandate just 10 or fewer days of annual holidays; in the U.S., workers receive an average of just eight days after one year on the job. But a 2014 survey by Harris Interactive found that Americans use only half of their eligible holiday days and paid time off. A 2015 report by Expedia showed that Americans collectively neglect 1.3 million years of vacation annually. And in several surveys, U.S. workers have confessed that they do not fully unplug from phone or email even when they are on holiday or ill. The Americans are not alone when it comes to this.

In a survey of more than 300 part- or full-time workers published last year in the Journal of Occupational Health Psychology, Barber and her colleagues found that employees who reported greater workplace telepressure missed more days of work, experienced more physical and mental burnout, and did not sleep as well as their less email-obsessed peers. Barber also found that telepressure can lower the quality of an employee’s work: responsivity doesn’t always mean productivity – all it shows is that someone is responding and available, but that is different from doing good work.

Changes to the brain’s structure and to behaviour most likely explain these improvements. Over time expert meditators may develop a more intricately wrinkled cortex—the brain’s outer layer, which is critical for many sophisticated mental abilities, such as abstract thought. These practitioners may also have increased volume and density in the hippocampus, an area that is absolutely crucial for memory. Finally, meditation appears to thicken regions of the frontal cortex that we rely on to regulate our emotions and prevent the typical wilting of brain areas responsible for sustaining attention as we age.

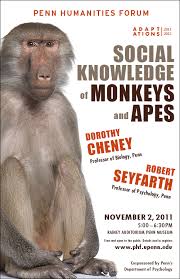

Picture two female chimpanzees hanging out under a tree. One grooms the other, systematically working long fingers through fur, picking out bugs and bits of leaves. The recipient sprawls sleepily on the ground, looking as relaxed as someone enjoying a spa day. A subsequent surreptitious measurement of her levels of oxytocin, a hormone associated with bonding and pleasure, would confirm that she is pretty happy.

To most of us, the pleasures of friendship are familiar. Like this pair of chimps, we are more likely to relax and enjoy ourselves at dinner with people we know well than with people we have just met. Philosophers have celebrated the joys of social connection since the time of Plato, who wrote a dialogue on the subject, and there has been evidence for decades that social relationships are good for us. But it is only now that friendship is getting serious scientific respect. Researchers from disciplines as diverse as neurobiology, economics and animal behaviour are recognising parallels between the interactions of animals and the habits of people at dinner parties and are asking far more rigorous questions about the motivations behind social behaviour.

Most critically, friendship is sustained. You might have a pleasant interaction with someone on the subway but would not call that person your friend. But the neighbour with whom you regularly exercise and occasionally dine? That is a friend.

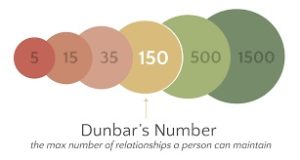

Christakis joined forces with James Fowler, a political scientist now at the University of California, San Diego (both were then at Harvard University), to study social networks of 3,000 or 30,000 or more people. Using computational techniques, they and others have established measures of connectedness that allow sophisticated mapping of these bonds. For example, they count how many friends I would name (“out-degree”) and how many friends name me (“in-degree”) separately, thereby dealing with any mismatch in our perceptions of how close we really are. Their 2009 book, Connected: The Surprising Power of Our Social Networks and How They Shape Our Lives, made the case that social connections of up to three degrees of separation have a significant influence on such things as weight as well as on smoking habits, altruism and voting behaviours.
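To make the “out-degree” and “in-degree” idea concrete, here is a minimal sketch in Python. It is not the researchers' own method or software: the names, the sample answers and the helper functions `degree_counts` and `unreciprocated` are all made up for illustration. It simply counts how many friends each person names, how many people name them, and which nominations are not returned.

```python
from collections import defaultdict

# Hypothetical survey answers: each person lists the people they call friends.
nominations = {
    "Ana": ["Ben", "Cleo"],
    "Ben": ["Ana"],
    "Cleo": ["Ana", "Ben"],
    "Dev": ["Ana"],
}


def degree_counts(nominations):
    """Return (out_degree, in_degree) dicts for a directed friendship network."""
    in_degree = defaultdict(int)
    out_degree = {}
    for person, named in nominations.items():
        friends = set(named)  # ignore accidental repeats in one person's list
        out_degree[person] = len(friends)
        for friend in friends:
            in_degree[friend] += 1
    # Make sure everyone appears in both dicts, even with a count of zero.
    everyone = set(nominations) | set(in_degree)
    return (
        {p: out_degree.get(p, 0) for p in everyone},
        {p: in_degree.get(p, 0) for p in everyone},
    )


def unreciprocated(nominations):
    """Pairs (a, b) where a names b as a friend but b does not name a."""
    return sorted(
        (a, b)
        for a, named in nominations.items()
        for b in set(named)
        if a not in nominations.get(b, [])
    )


out_degree, in_degree = degree_counts(nominations)
print("Ana names", out_degree["Ana"], "friends and is named by", in_degree["Ana"])
print("One-sided nominations:", unreciprocated(nominations))
```

Keeping the two counts separate is what exposes the mismatch: in this made-up data, Dev names Ana as a friend, but nobody names Dev.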

Research in animals has been important in establishing the idea that a strong social bond, all by itself, may have evolutionary significance. Evolutionary theories are hard to prove. Many experiments designed to test these ideas require studying not just a single group or population but their descendants. Most animal species have shorter life spans than humans, however, making measuring generational change a simpler proposition. That can make it easier to tease out cause from correlation. In addition, findings that echo across species suggest biological rather than cultural origins. To date, horses, elephants, hyenas, monkeys, chimpanzees, whales and dolphins have all been shown to form social bonds that can last for years. Studies of our closest living relatives, monkeys and apes, have been especially groundbreaking. Seyfarth and Cheney have studied the same troop of baboons in Kenya for more than 30 years. When they began, primatologist Robert Hinde had already established that nonhuman primates had notable social relationships. One of the first things Seyfarth and Cheney did was use audio-playback experiments to show that baboons were aware of the relationships of others. When a group of female monkeys heard an offspring’s distress vocalisation, they often looked at the infant’s mother. That suggests that the social relationships were not just a figment of our human imagination.

If evolution is steering various species, including our own, toward prosocial behaviour, it makes sense to seek evidence in the genome. Already genetic variation has been identified in people with disorders that affect social function, such as autism and schizophrenia. And some genes in the dopamine and serotonin pathways have been consistently linked with social traits. Genetics started with an understanding of how genes affect the structure and function of our bodies and then our minds. And now scientists are beginning to ask how genes affect the structure and function of our societies.

As part of this work, in a 2012 paper in Nature, they even mapped the social network of the Hadza hunter-gatherers of Tanzania, who live essentially as humans did 10,000 years ago. Christakis and Fowler showed that the Hadza form networks with a mathematical structure just like humans living in modernised settings, suggesting something very fundamental about the structure of friendship.

But of course, the most straightforward result of this work would be to spark a deeper appreciation of just how important our friends are in our life. Other individuals are in fact the source of some of our greatest joys. And now we know that they do not just make us happy; they help keep us alive. So it is time to celebrate the power and importance of your friendships for the rest of this year – and well on into the future!

Researchers at the University of Texas at Austin put together a speed-dating pool of about 200 men and women. They also took photos of the participants, mimicking those found on online dating sites, and recorded short videos of the same individuals to see what kinds of first impressions people would form in each context. For each scenario, participants rated those they “met” on traits such as attractiveness, humour, intelligence and other qualities that we usually judge within seconds. The researchers presented their findings in January at the Society for Personality and Social Psychology meeting in San Diego.

In another new paper, in the Journal of Experimental Social Psychology, researchers asked whether contrast effects occur when judging personality. Participants viewed two dating profiles. When the first person came across as uncaring (“I get bored talking about feelings and stuff”), the second person, who was nice but unattractive, seemed much more appealing. In real profiles, people might not appear as blatantly callous as in this study, but other personality traits could be turnoffs that bias viewers’ later decisions.

The researchers selected 100,000 users of a large online dating site and gave half of them the ability to browse anonymously, which usually costs extra. They became less inhibited and more likely to look at people of the same sex or a different race. The researchers thought the disinhibition would translate into more matches, but women with this ability actually made fewer matches because they did not leave so-called weak signals of interest that might lead the other party to follow up. The simple notification that a particular person perused your profile is often enough to get a conversation started. Anonymous browsing did not affect men’s matches as much, because the men were already uninhibited: they messaged individuals who interested them. Women, however, are less likely in general to make the first move and therefore depend more on sending weak signals to invite flirtation.

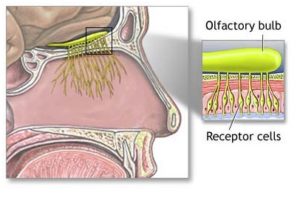

A damaged sense of smell, therefore, can indicate that the ability to make those connections has been hampered by disease, a lack of sleep or, as shown in Xydakis’s study, injury to the brain. The new results add to a growing understanding of the link between brain damage and an impaired sense of smell. Researchers have been working for years to use olfaction tests to track damage to the brain caused by neurodegenerative ailments such as Parkinson’s and Alzheimer’s diseases.

The unique characteristics of our sense of smell make sniff tests ideal for diagnosing brain injury. Here are some of the most interesting scientific findings about this unusual sense: