DOWNLOAD THE ENTIRE JANUARY 2016 NEWSLETTER including this month’s Freebie.

At any given time, at least one in five adults reports being on a diet, but the majority don’t keep the weight off. A huge amount of scientific evidence tells us that dieting does not promote lasting weight loss. In fact, many dieters end up gaining back more weight after they quit.

When I say “diet,” I am referring to eating regimens that require cutting portions, severely restricting calories, or eliminating entire food groups: carbs, fats, sweets, whatever. Despite such deprivations, diets remain alluring because they offer a clear and quick prescription dictating what you should and should not eat. These tactics are meant to tame erratic eating behaviours and revise poor food choices. But the truth is that such strategies hardly ever work because they are too extreme and thus almost impossible to maintain over the long term.

Having trawled through many papers and articles about losing weight, I find that the best advice from psychologists and researchers is: Do not diet. Do not cut out groups of foods or count calories. Do not try to eat very little or deprive yourself. Such strategies backfire because of psychological effects that every dieter is all too familiar with: intense cravings for foods you have eliminated, bingeing on junk food after falling off the wagon, an intense preoccupation with food. A growing body of research shows why these tendencies undermine most people’s diet efforts and confirms that the way around these pitfalls is moderation. Making small changes to your eating patterns, ones you can build on slowly over time, is truly the best pathway to lasting weight loss. Although you may have heard this message of moderation before, the evidence is finally too overwhelming to ignore.

Effective weight management is particularly important when we consider that two thirds of Americans older than 20 are overweight or obese (most of the studies have been conducted in the States). With the rise in obesity rates and related health problems such as diabetes and heart disease, both of which are leading causes of death in the U.S., it has become even more critical for us all to approach weight loss armed with a keen understanding of what really works and what doesn’t. Let’s start with what doesn’t.

Why Typical Diets Fail

- The “what the hell effect”

Studies have consistently revealed that dieting usually leads to weight gain, not weight loss. In a 2013 review published online in Frontiers in Psychology, investigators reported that 15 of 20 studies showed that dieting predicted weight gain in adolescents and adults of normal weight.

One problem with diets is that once you give in to temptation after restricting yourself, you are more likely to binge. This tendency, which psychologists dub the “what the hell effect,” undermines attempts to lose weight. A 2010 study by psychologists at the University of Toronto demonstrated this effect in people who believed they had broken their diet. In the study, 106 female students (some of whom were dieting and some of whom were not) all received identical slices of pizza. Some of the students saw a person carrying another slice that was either bigger or smaller than the one they got, and others did not see another slice. After they ate the pizza, the participants were asked to taste-test a range of cookies. Women who weren’t dieting, and dieters who thought they had eaten a smaller than usual slice or who didn’t see a comparison slice, ate a small number of cookies. But dieters who thought they had violated their diet by eating a bigger slice ate more cookies than everyone else.

The researchers suggest that these women believed they had already blown their diet, so what the hell, might as well pig out on cookies. This study and many others like it confirm that violating your diet, or even just thinking you have, is enough to make you abandon self-control.

- Ironic processing

Some diets promise you’ll avoid feelings of deprivation by letting you eat as much as you want of certain food groups while totally eliminating others. The trouble is that when you eliminate your favourite foods—a requirement of most weight-loss regimens—you develop a deeper longing for them. Vow to avoid pasta, and you will soon find yourself dreaming about the plate of spaghetti bolognese or a vegetarian lasagne.

Food preoccupation is an inevitable result of dieting. Psychologists call this phenomenon “ironic processing”—suppressing a thought makes it more salient. It became famous when the late social psychologist Daniel Wegner did a series of experiments—the white bear studies—in which he asked subjects to avoid all thoughts of a white bear. Guess what creature relentlessly prowled through their minds…

Many studies over the years have shown that people who try to eliminate food groups end up craving those foods intensely. One published last year confirms that finding and adds to mounting evidence that not only do people crave the forbidden food, they eat more of it when they get a chance. The study compared eating patterns in 23 normal-weight nondieters who restricted their intake of palatable foods, such as doughnuts and ice cream (again, American studies…), and 23 similar people who merely recorded their snack intake. The researchers found that participants who restricted themselves reported craving and eating more treats, whereas those who simply monitored their snacks did not. This growing line of research suggests that for most people, eliminating foods entirely will backfire badly.

In fact, treating yourself to indulgences may help you avoid the pitfalls of craving and overeating forbidden foods. In a 2012 study, 144 obese men and women were put on a strict, low-calorie diet for 16 weeks. About half ate a regular breakfast (300 calories), and the rest consumed a larger breakfast (600 calories) that included something sweet, such as a doughnut or chocolate, and ate less at dinner to make up for it. In the second half of the study, participants tried to maintain their meal plans on their own for 16 more weeks. The participants kept food diaries and continued to receive counselling from a dietician.

After the initial 16 weeks of close monitoring, the small breakfast group had lost a few more pounds than the large breakfast group (33 versus 30 pounds). But in the self-maintenance 16-week period, the small breakfast group regained 25 pounds, whereas the large breakfast group continued to shrink, dropping 15 additional pounds. Notably the small breakfast group reported increased cravings for sweets, fats and fast foods at the end of the study, whereas the large breakfast group reported reduced cravings in each category. Although eating dessert for breakfast is not necessarily the fastest or healthiest route to weight loss, these findings demonstrate that it is possible to have your cake and lose weight, too.

- Mental fatigue

Although efforts to change your eating behaviours require attention and record keeping, especially at the beginning, focusing too much energy on what you eat reduces your ability to do other, potentially more important things. Studies that examine the mental energy available to dieters versus nondieters consistently reveal that dieters have more difficulty learning new information, solving problems and exerting self-control.

Overthinking your food choices may also have negative consequences for your mental health. A 2010 study in Appetite looked at the mental toll of eating chocolate among dieters and nondieters. The nondieters were not particularly distracted by this indulgence, but the dieters could no longer think clearly, becoming consumed with thoughts such as “Why did I eat that?” and “What should I eat later today to make up for eating that?”

Another experiment published in 2010 found that women who restricted their caloric intake and recorded what they ate exhibited elevated cortisol levels, a marker of biological stress. Even women who simply monitored their meals without trying to restrict calories reported feeling more stressed, and they ended up gaining weight. The bottom line is that, for most people, diets not only backfire but also take a heavy toll on physical and mental well-being.

What You Should Do

- Start with your head

If you want to improve your body, you must also improve your mind-set. Decades of research show that individuals who are dissatisfied with their bodies are less successful at losing weight. Studies also show that it is possible for anyone to learn to feel good about his or her body.

In a 2014 study, women with eating disorders, including some who binged or who were overweight, received compassion-focused therapy, an approach aimed at reducing feelings of shame and improving self-esteem. Over the 12-week treatment, women who exhibited greater improvements in self-compassion and reductions in body shame were also more likely to develop better eating habits.

One simple way to improve your self-esteem, according to many findings, is to write positive affirmations on a regular basis. Happiness research has consistently shown that focusing on what you do like—“I have nice eyes”—and on health rather than appearance-related goals—“I want to run a 5K this year”—can help you develop a healthier mind-set and self-image.

- Simple, slow and steady

When setting a weight-loss goal, it is natural to want to accomplish it immediately. Yesterday, please, rather than tomorrow! But to maintain a more svelte figure, you need to make gradual, sustainable changes to your diet: for example, drinking less alcohol and juice, replacing diet drinks with (sparkling) water, and eating dessert on four nights a week instead of seven. Making even small changes such as these may sound like a “diet,” which I have just told you to avoid, but it is not, for one important reason: this slow, steady approach allows you to adjust to a new routine at your own pace without the intense effort and denial that typical diet plans require.

Apparently most people trying to lose between 2 and 20 kg will benefit from this slow-to-moderate approach to weight loss, but it is important to note that individuals whose health is at serious risk because of obesity will likely need more drastic measures and should consult a doctor.

A large body of research supports the idea that making simple, gradual changes to your eating patterns is the best way to promote lasting weight loss. Robust evidence for this approach comes from a 2008 study, which demonstrated that overweight and obese adults who made very modest changes to their daily calorie intake and physical activity levels lost four times more weight than those following regimens that involved more extreme calorie restriction. The moderate group lost 10 pounds in one month, and they sustained the weight loss over the next three months.

In support of this approach, a 2015 study published in PLOS ONE found that women who successfully modified their diet and exercise habits over time set small, achievable behaviour change goals, had realistic expectations about their weight loss and were internally motivated to lose weight. The women who relapsed or failed to change their habits tended to have unrealistic expectations, lower motivation and self-confidence, and less satisfaction with their progress.

Some of the most compelling data on effective weight-loss strategies comes from the National Weight Control Registry (NWCR), which surveyed more than 4,000 people who lost at least 14 kg and kept it off for at least a year. The best tactics, according to the seminal 2006 report, included self-monitoring, such as limiting certain foods, keeping track of portion sizes and calories, planning meals and incorporating exercise into the daily routine.

Such advice may appear to conflict with the research I described earlier on the pitfalls of restriction and mental fatigue, but the truth, based on the research, seems to be that to lose weight it is important to find the right balance. For instance, before making changes to your diet, you need to understand your current eating patterns, which may require considerable thought and attention. Most overweight individuals, when they are not trying to diet, tend to eat erratically: consuming junk food, snacking a lot and indulging cravings on a whim. Becoming aware of these habits, the good and bad, will allow you to tailor them.

As you begin to make small tweaks to your daily eating, start to plan a few meals you like that you can cycle through on a regular basis, so you don’t have to think too hard about what you’re going to eat every day. According to the NWCR data, people who plan their meals are 1.5 times more likely to maintain their weight loss. The NWCR data also show that limiting the variety of foods you eat can help you sustain your weight. You don’t have to eat the same foods every day, but generally reducing the array of options makes grocery shopping less stressful.

- Work it out

We all know by now that exercise is essential for all-around health. Yet study after study shows that working out is not terribly effective for weight loss on its own. When combined with better eating habits, however, exercise appears to help people slim down. A 2012 study looked at the effects of diet or exercise, or both or neither, in a group of overweight or obese post-menopausal women. Dieters could consume between 1,200 and 2,000 calories a day, depending on their initial weight, and exercisers ramped up to 45 minutes or more of cardio five days a week. After 12 months, those in the combined diet and exercise group lost the most weight, about 19.5 pounds (8.9 kg), although the diet-only group was not far behind, losing 15.8 pounds (7.2 kg). Those who only exercised lost 4.4 pounds (2 kg), and the control group, who didn’t exercise or eat differently, lost 1.5 pounds (0.7 kg) over the year.

Once your goal weight is achieved, exercise may be crucial for keeping the scale steady. Most people who have slimmed down report that routine physical activity is an important part of their maintenance regimen. Exercise has many physiological benefits; it even appears to moderate the brain’s reaction to pleasurable foods. In a small 2012 study, overweight or obese participants underwent an initial brain scan while looking at images of food. Then they were put on a six-month exercise regimen. At six months, the exercisers showed decreased activity in the insula, which regulates emotions, in response to images of palatable treats. They did not, however, report changes in dietary restraint, food cravings or hunger, suggesting that the neural effects are subtle—perhaps helpful during weight maintenance but not strong enough to induce weight loss.

Incorporating exercise into your life should be a gradual process. You don’t have to run marathons to reap psychological and physical rewards. Going for a lunchtime walk or biking to work is a way to integrate activity into your daily routine. You can also increase your movements in small ways by taking the stairs instead of the lift or washing your car instead of driving through the car wash. Being disciplined is important, but making exercise fun and sustainable is also essential.

- Don’t do it alone

Receiving social support is key to losing weight. Consulting a doctor or nutritionist is one way to elicit support and provide greater accountability.

Research also demonstrates the role romantic partners play in encouraging weight loss: men are better able to adopt and stick with healthier eating habits when they receive support and encouragement from their spouse. Similarly, friends, co-workers and online weight-loss buddies can keep you on track by offering inspiration and praise and by acting as partners in crime. More systematic help has been shown to be useful, too, such as becoming a member of Weight Watchers or other support groups or participating in the community of users of smartphone apps such as MyFitnessPal and Lose It!

After decades of diet studies, we can no longer ignore the fact that the majority of evidence points toward these small, sustainable steps as the best way to lose weight. That message may not be as sexy or exciting as the latest fad diet, but the science is clear: moderation leads to changes that will last for the rest of your life. Creating good habits takes time, patience and resolve, and you will inevitably encounter setbacks along the way. But the key is to never give up—and in a few short months, you may find yourself on the road to the body and active way of life you’ve always dreamed about.

References:

■ Dietary and Physical Activity Behaviors among Adults Successful at Weight Loss Maintenance. Judy Kruger, Heidi Michels Blanck and Cathleen Gillespie in International Journal of Behavioral Nutrition and Physical Activity, Vol. 3, Article No. 17. Published online July 19, 2006.

■ Dieting and Restrained Eating as Prospective Predictors of Weight Gain. Michael R. Lowe, Sapna D. Doshi, Shawn N. Katterman and Emily H. Feig in Frontiers in Psychology, Vol. 4, Article No. 577. Published online September 2, 2013.

■ Smart People Don’t Diet: How the Latest Science Can Help You Lose Weight Permanently. Charlotte N. Markey. Da Capo/Lifelong Books, 2014.

From Our Archives

Spending a lot of time on Facebook is linked to diminished well-being, according to many studies. Yet questions linger about cause and effect—perhaps people who are already lonely simply spend more time on social media. New studies reveal that Facebook can indeed affect mood and mental state, and whether the effect is positive or negative depends heavily on how a person interacts with his or her contacts. Several of the new findings reveal that when Facebook hurts, the underlying culprit is—you guessed it— envy. Those studies did not attempt to figure out why some people experienced envy and others did not, but other studies have found that the way a user interacts with Facebook may be crucial. For example, researchers at the University of Michigan and KU Leuven in Belgium tracked 173 students’ habits over time and found that passive use—browsing news feeds, for example—led to reduced well-being by increasing feelings of envy. Active use, such as posting and commenting, had no such effect, according to the two studies, published in April 2015 in the Journal of Experimental Psychology: General.

We now have some clues about typical email response patterns, thanks to a recent study drawing on 16 billion emails sent by more than two million people. The participants were Yahoo Mail users who allowed their anonymised data to be used in what appears to be the largest-ever analysis of email behaviour. The researchers, based at the University of Southern California and Yahoo Labs, used algorithms to mine data about the times messages were sent and the number of words they contained, among other factors. Here are some of the surprising revelations:

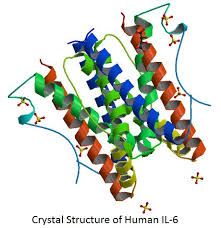

In the second experiment, 105 students completed online questionnaires designed to assess their tendency to experience several specific positive emotions. They later visited the lab to provide saliva samples. Joy, contentment, pride and awe were all associated with lower levels of IL-6, but awe was the only emotion that significantly predicted levels using a strict statistical test.

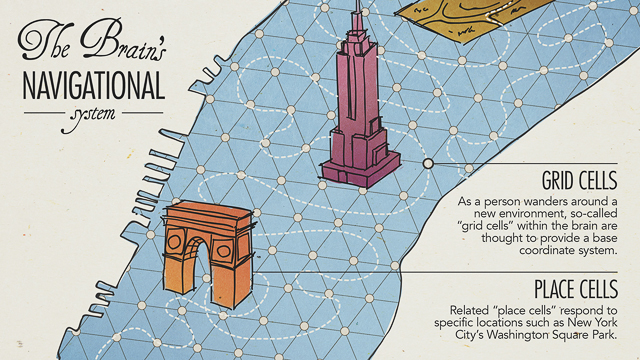

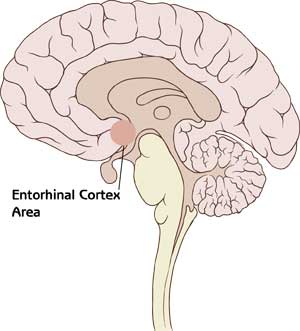

Interestingly, the more consistent the participants’ goal-direction signals were, the better they were able to correctly recall in which direction the target objects lay, potentially offering a brain-based explanation for the differences in navigational ability. Such results should be interpreted carefully, however. There are apparently many ways worse performance can lead to weaker effects; for instance, if a participant’s attention lapses, he or she will not only perform worse but also produce less relevant brain activity.

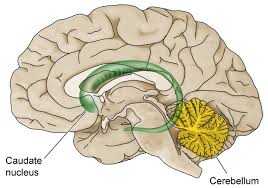

This elegant finding links intuition with the caudate nucleus, which is part of the basal ganglia—a set of interlinked brain areas responsible for learning, executing habits and automatic behaviours. The basal ganglia receive massive input from the cortex, the outer, rindlike surface of the brain. Ultimately these structures project back to the cortex, creating a series of cortical–basal ganglia loops. In one interpretation, the cortex is associated with conscious perception and the deliberate and conscious analysis of any given situation, novel or familiar, whereas the caudate nucleus is the site where highly specialised expertise resides that allows you to come up with an appropriate answer without conscious thought. In computer engineering parlance, a constantly used class of computations (namely those associated with playing a strategy game) is downloaded into special-purpose hardware, the caudate, to lighten the burden of the main processor, the cortex.

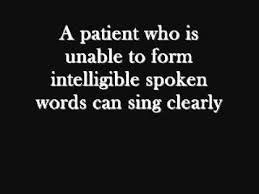

In the 1970s neurologist Martin Albert and speech pathologists Robert Sparks and Nancy Helm recognized the therapeutic implications of this ability and developed a treatment called melodic intonation therapy in which singing is a central element. During a typical session, patients will sing words and short phrases set to a simple melody while tapping out each syllable with their left hand. The melody usually involves two notes, perhaps separated by a minor third (such as the first two notes of “Greensleeves”). For example, patients might sing the phrase “How are you?” in a simple up-and-down pattern, with the stressed syllable (“are”) assigned a higher pitch than the others. As the treatment progresses, the phrases get longer and the frequency of the vocalizations increases, perhaps from one syllable per second to two.

Rhythm is the key to treating people with Parkinson’s, which affects roughly one in 100 people older than 60. Parkinson’s arises from degeneration of cells in the midbrain that feed dopamine to the basal ganglia, an area involved in the initiation and smoothness of movements. The dopamine shortage in the region results in motor problems ranging from tremors and stiffness to difficulties in timing the movements associated with walking, facial expressions and speech.

Perhaps the most fascinating interplay between music and the brain lies in the case files of people with autism spectrum disorder, a neurodevelopmental syndrome that occurs in 1 to 2 percent of children, most of whom are boys. Hallmarks of autism include impaired social interactions, repetitive behaviours and difficulties in communication. Indeed, up to 30 percent of people with autism cannot make the sounds of speech at all; many have limited vocabulary of any kind, including gesture.

half a million people of various ages in 72 countries. They concluded that the happiness level throughout an individual’s life span frequently follows a U-shaped curve, bottoming out in the early to mid-40s. In some locations, this emotional nadir did not appear in the raw data but emerged once Blanchflower and Oswald reanalysed the numbers to consider the potentially confounding contributions of marital status, income, education and other factors on contentment.

problems and fewer children, reported emotional suffering. Overall, most parents reported positive feelings, such as pride at having been successful in raising their children so that they could move out.